Connect Application to Spark Cluster through Databricks

The C3 Agentic AI Platform has a built-in connector for integrating with Databricks.

To connect to Databricks from your application:

- Add a SqlSourceSystem modeling the Databricks source system to your package.

- Configure the JdbcCredentials authorizing the connection to the external Databricks table.

- Add a SqlSourceCollection modeling the target Databricks table to your package.

- Create an External Type modeling the schema of the external Databricks table.

The following sections include detailed instructions for configuring the connection, using Azure Databricks as an example.

For more information, see the Databricks documentation on configuring the JDBC driver.

Model the source system

Create a SqlSourceSystem and set the name field as the identifier for the external database system.

For example, you can add the following DatabricksSourceSystem.json to the \metadata\SqlSourceSystem directory of your package:

{

"name": "DatabricksSourceSystem"

}Configure the credential used to authorize the JDBC connection

Create a JdbcCredentials Type instance to configure the connection to the external Databricks table, passing the following fields to the JdbcCredentials.fromServerEndpoint() method:

serverEndpoint— Databricks server hostnameport— 443datastoreType— Specifies that the JdbcCredentials authorizes a connection to Databricksdatabase— The name of the schema within the databaseschemaName— nullusername— the account with authorization to access the Databricks table; if using an access token, set totokenpassword— Databricks personal access token for your workspace userhttpPath— additional metadata required to make the JDBC connection

For example, run the following from console to configure the JdbcCredentials to connect to an Azure Databricks instance:

creds = JdbcCredentials.fromServerEndpoint("SERVER_HOSTNAME",

443,

DatastoreType.DATABRICKS,

"default",

"null",

"token",

"PERSONAL_ACCESS_TOKEN");

creds = creds.withField("properties", {"httpPath" : "HTTP_PATH"});

JdbcStore.forName("DatabricksSourceSystem").setCredentials(creds, ConfigOverride.APP);

JdbcStore.forName("DatabricksSourceSystem").setExternal(ConfigOverride.APP);Model the table containing the data

To model the external Databricks table in your application, create a SqlSourceCollection and set the following fields:

name: Identifier for the Databricks table source: Name of the External Type that models the schema of the external Databricks table sourceSystem: Name of the Databricks SqlSourceSystem

For example, to model a table called iris, you can add the following DatabricksIrisTable.json to the \metadata\SqlSourceCollection directory of your package:

{

"name" : "Iris",

"source" : "Iris",

"sourceSystem" : {

"type" : "SqlSourceSystem",

"name" : "DatabricksSourceSystem"

}

}Model the table schema

To model the schema of the Databricks table in your application, create an External Entity Type with a schema name that matches the name of the table in the Databricks exactly, at it is case-sensitive.

Start by adding the following Iris.c3typ file to the \src directory of your package:

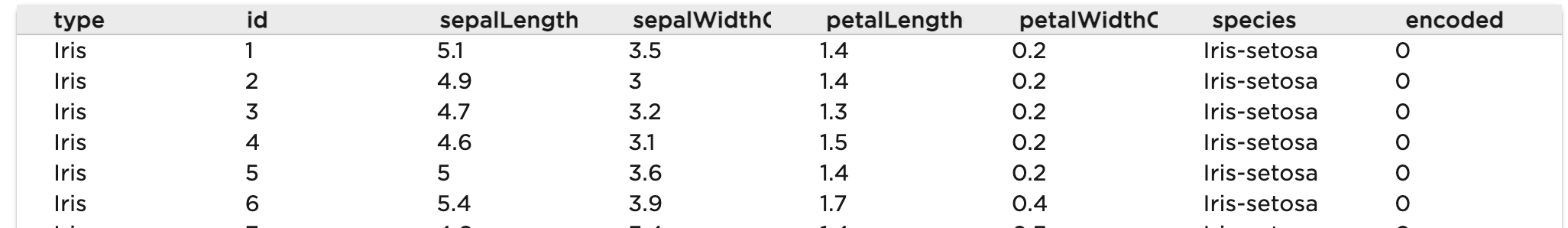

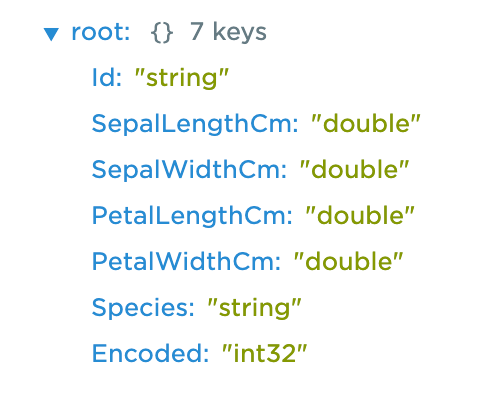

entity type Iris mixes External, NoSystemCols schema name 'default.iris'For the iris table, the schema name is a qualified name consisting of the schema name and table name separated by a dot. You can use the inferSourceType() method to access the C3 AI data types of the table, which the platform infers from the source data types.

var schema = SqlSourceCollection.forName("Iris").inferSourceType().declaredFieldTypes;

var myObject = {};

for (let i = 0; i < schema.length; i++) {

schemaName = schema[i].schemaName;

myObject[schemaName] = schema[i].valueType.name;

}

Azure Databricks data types are mapped to C3 AI Data Types according to the following table. See also PrimitiveType.

| Azure Databricks Data Types | C3 AI Data Types |

|---|---|

| TINYINT, SMALLINT, INT, BIGINT | int, int16, int32 |

| DECIMAL | decimal |

| FLOAT | float |

| DOUBLE | double |

| DATE, TIMESTAMP, TIMESTAMP_NTZ | datetime |

| BINARY | binary |

| BOOLEAN | boolean |

| INTERVAL | Not Supported |

| STRING | string |

| VOID | Not Supported |

| ARRAY, MAP, STRUCT | json |

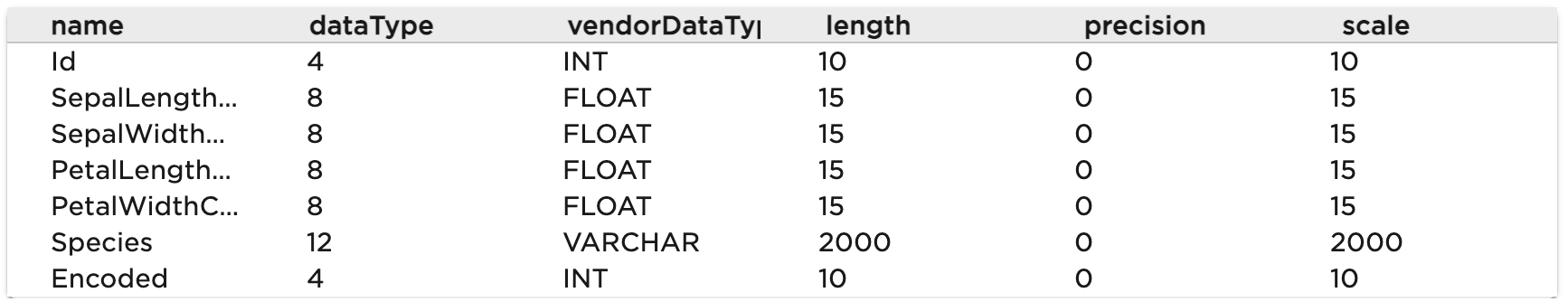

You can also access the source data types to validate the type inference:

SqlSourceCollection.forName("Iris").connect().columns;

Complete the External Entity Type definition:

entity type Iris mixes External, NoSystemCols schema name 'default.iris' {

id: ~ schema name "Id"

sepalLengthCm: double schema name "SepalLengthCm"

sepalWidthCm: double schema name "SepalWidthCm"

petalLengthCm: double schema name "PetalLengthCm"

petalWidthCm: double schema name "PetalWidthCm"

species: string schema name "Species"

encoded: int schema name "Encoded"

}Note that the id field is required. If your table does not have a column called id, you can change the schema name for the corresponding id field with the following annotation:

@db(dataTypeOverride="ID_FIELD_DATA_TYPE")

id: ~ schema name "ID_FIELD"Read data from the table

After setting the credential, you can validate the configuration by fetching the External Type data from the Databricks table:

c3Grid(Iris.fetch());