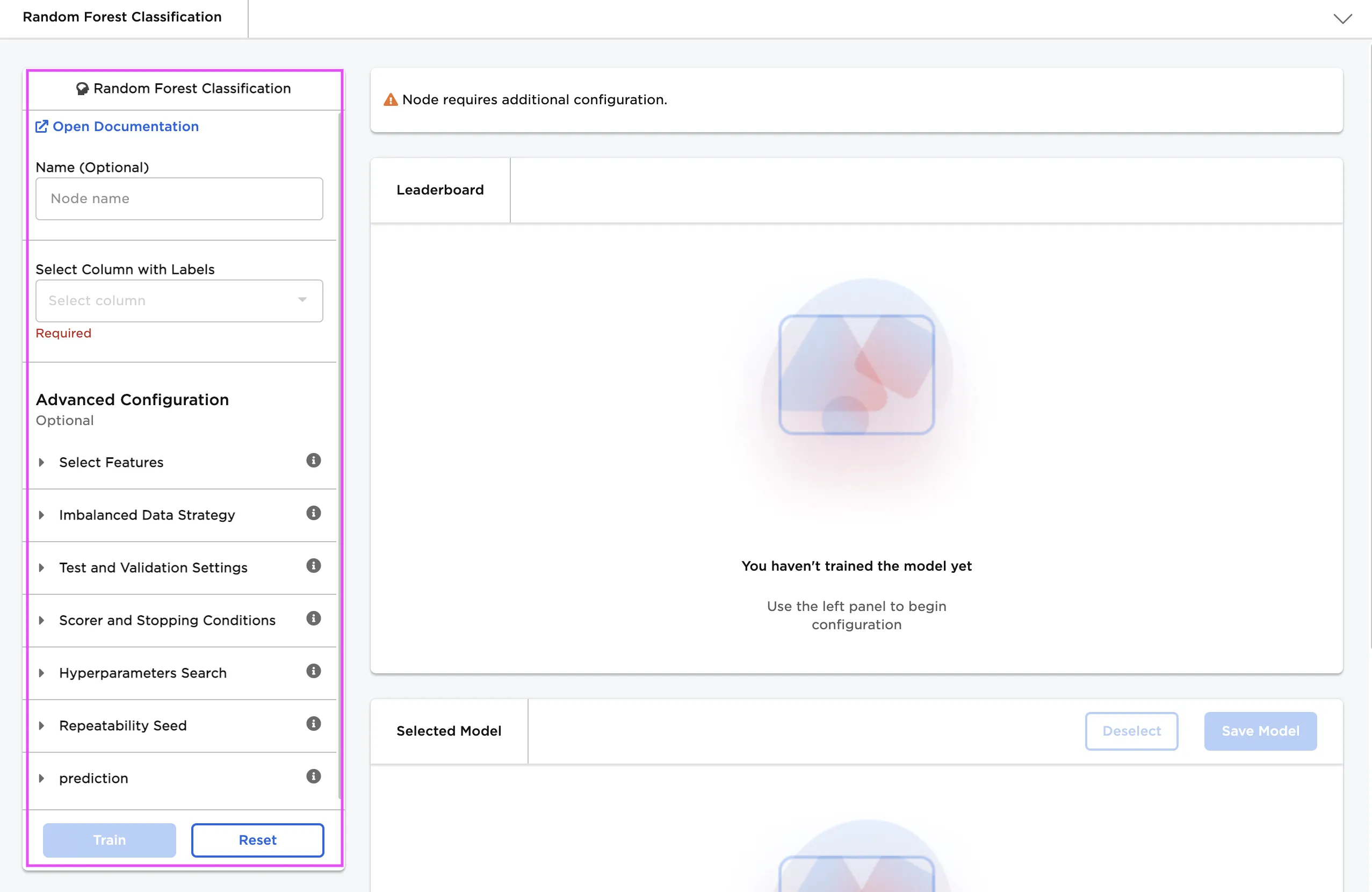

Random Forest Classification

Categorize data using a random forest classification model in C3 AI Ex Machina. Random forest models consist of many trees that classify data using a majority-vote approach. To learn more about random forest models, see the C3 AI glossary.

Configuration

| Field | Description |

|---|---|

| Namedefault=none | Name of the nodeA user-specified node name displayed in the workspace, both on the node and in the dataframe as a tab. |

| Select Column with Labels*Required | The column the random forest classifier should predictSelect a column from the dropdown menu. This column contains the labels that the model should be able to predict after the training. |

Advanced Configuration

Optionally alter the advanced configuration fields to control the output of the node.

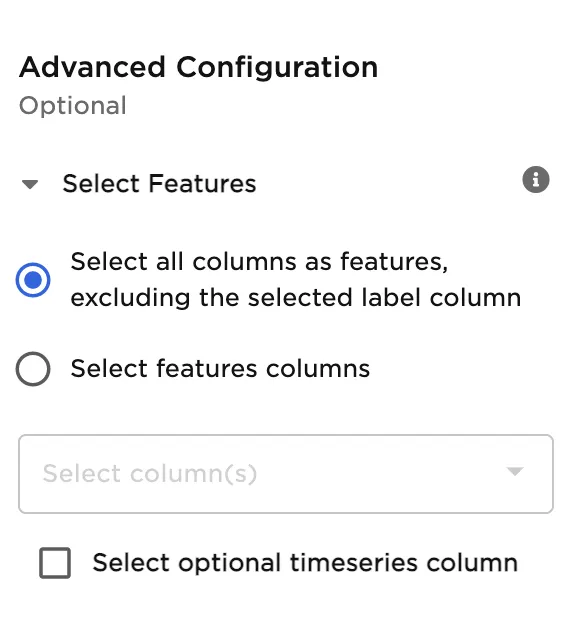

Select Features

| Field | Description |

|---|---|

Select Featuresdefault=Select all columns as features, excluding the selected label column | Features to train the model withUse all columns as features, or select specific columns using the dropdown menu. Columns selected as features are used to train the model. |

Select optional timeseries columndefault=Off | Timeseries columnIf there is a timeseries column in your data, check the box in this field and select the timeseries column from the dropdown menu. Timeseries information is used when splitting the data into separate train, validation, and test datasets. |

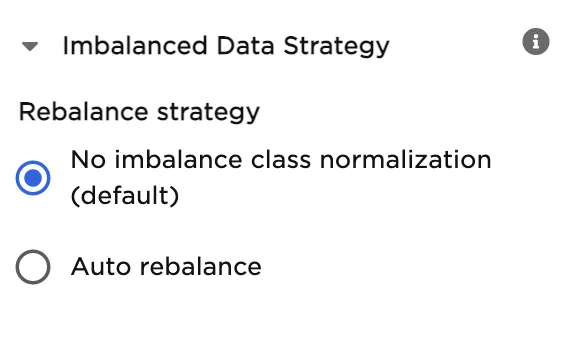

Imbalanced Data Strategy

Classification models expect data to be somewhat balanced. If one label in your dataset is severely underrepresented, models might ignore that label during training and always predict a more prevalent label. Since the minority label represents such a small portion of the data, models can use this strategy without generating a significant number of false predictions. To prevent this behavior, Ex Machina balances the data so all labels are evenly represented.

| Field | Description |

|---|---|

Rebalance strategydefault=No imbalance class normalization | Rebalance optionsSelect Auto rebalance to automatically balance the data so all labels are evenly represented. Leave No imbalance class normalization selected to leave the input data unchanged. |

Test and Validation Settings

When training models, data is split into multiple components. The bulk of the data is used for training and validation, while a small portion is set aside for testing. The fields in this section determine what percentage of the data is used for training, how the data is used during the training process, and the strategy used to split the data.

| Field | Description |

|---|---|

Select test and validation methoddefault=Train-validation-split | Test and train methodSelect Train-validation-split to split the dataset into separate train, validation, and test datasets. Select Cross-validation to split the data into a specified number of subsets. During training, one subgroup is used for testing and validation, while the other subgroups are used for training. The process is then repeated so each subgroup is used as the testing and validation group once. |

Select percentage splitdefault=Train: 70%, Validation: 15%, Test: 15% | Data split percentageMove the slider to split the data into test, validation, and train datasets. If Cross-validation is selected in the Select test and validation method field, move the slider to split the data into a train dataset that will be divided into subgroups, and a separate testing dataset. The default split when using the cross-validation method is 80% train and 20% test. |

Select number of cross-validation foldsdefault=6 | Number of cross-validation subgroupsEnter a number between 2 and 20. The data allocated for training is divided into the specified number of subgroups. |

Select sampling methoddefault=Stratified | Data splitting strategySelect Stratified to ensure that each dataset and subgroup contains the same percentage of each label as the entire dataset. Select Random to randomly split the data into the percentages specified above. Note that selecting Random may result in test data that doesn't accurately represent the entire dataset. |

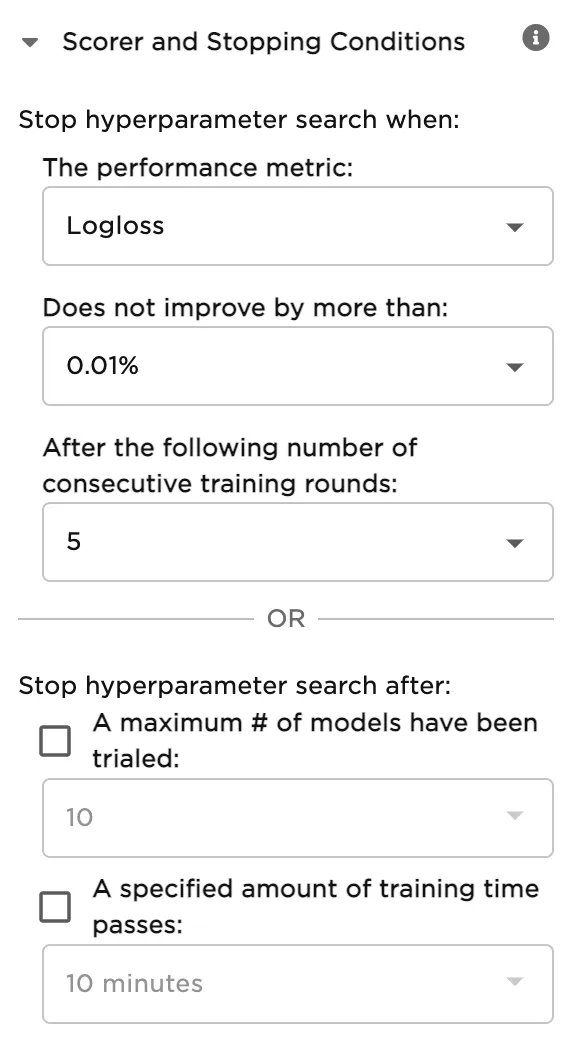

Scorer and Stopping Conditions

By default, Ex Machina trains many models with different hyperparameter configurations, then ranks the models by performance. The fields in this section tell Ex Machina when to stop making new models. You can stop making models once the new models no longer substantially improve upon the existing models. Alternatively, you can stop making new models after a specified number of models have been trained or a certain amount of time has passed.

| Field | Description |

|---|---|

The performance metricdefault=Logloss | The performance metric used to stop hyperparameter searchSelect Logloss, AuROC, AuPR, MSE, or RMSE. When training multiple models with different hyperparameter combinations, stop creating models when the new models fail to improve a specified performance metric. This field is used in conjunction with the following two fields. |

Does not improve by more thandefault=0.01% | The specific threshold used to stop hyperparameter searchSelect 0.1%, 0.01%, 0.001%, or 0.0001%. When training multiple models with different hyperparameter combinations, stop creating models when the new models fail to improve the specified performance metric by the given percentage. This field is used in conjunction with the fields directly above and below. |

After the following number of consecutive training roundsdefault=5 | The criteria used to stop hyperparameter searchSelect a number between 2 and 10. When training multiple models with different hyperparameter combinations, stop creating models when the new models fail to improve the specified performance metric after the following number of consecutive training rounds. This field is used in conjunction with the two fields above. |

A maximum # of models have been trialeddefault=10 | How many models to trainSelect 3, 5, 10, 20, 50, 100, 200, or 500. When training multiple models with different hyperparameter combinations, stop creating new models after a specified number of models are created. |

A specified amount of training time passesdefault=10 minutes | When to stop training new modelsSelect 5 minutes, 10 minutes, 20 minutes, 30 minutes, 1 hour, 2 hours, 12 hours, or 24 hours. When training multiple models with different hyperparameter combinations, stop creating new models after a specified amount of time passes. |

Hyperparameters Search

As mentioned in the previous section, Ex Machina trains many different models with various hyperparameter combinations. The fields in this section determine the hyperparameter options used during training. Although you don't need to alter these fields to train a high-performing model, it can be interesting to explore different combinations.

Hyperparameters give you precise control over a model. You can use these to tell the model how quickly to learn, when to stop improving, and what to prioritize during the learning process. In general, the goal of changing the hyperparameters is to make the best possible model while avoiding overfitting. A model is considered overfit when it is too closely aligned to the training data to produce accurate predictions on unseen data.

| Field | Description |

|---|---|

Hyperparameters Searchdefault=Search | Train one model or multiple modelsSelect Search to train multiple models with different hyperparameter combinations and then compare the models to find the best one. Select Fixed to train a single model with a fixed hyperparameter configuration. |

Number of Trees in Forestdefault=20, 50, 100, 150, 200 | The number of trees to buildEnter an integer between 1 and 10,000. More trees create a more accurate model, but can lead to overfitting. Values between 50 and 200 are common. If you define a fixed model, the default is 50. |

Maximum Tree Depthdefault=4, 8, 12, 16, 20, 40 | The maximum number of levels in each treeEnter an integer between 0 and 100. Setting this value to 0 specifies no limit. Increasing the tree depth allows the model to fine-tune its performance, but may lead to overfitting. If you define a fixed model, the default is 20. |

Maximum Number of Binsdefault=16, 32, 64, 128, 256, 512 | How many data points are in a leaf node Specify the maximum number of bins. Increasing this value makes the model more accurate, but may result in overfitting. If you define a fixed model, the default is 32. |

Number of Bin Categoriesdefault=128, 256, 512, 1024, 2048, 4096 | Number of bins for categorical dataSpecify the maximum number of bins for categorical data. Increasing this value makes the model more accurate, but can result in overfitting and increased runtime. If you define a fixed model, the default is 1024. |

Number of Bins Top Leveldefault=128, 256, 512, 1024, 2048, 4096 | Minimum number of bins Specify the minimum number of bins at the root level. This number is decreased by a factor of two at each new level. If you define a fixed model, the default is 1024. |

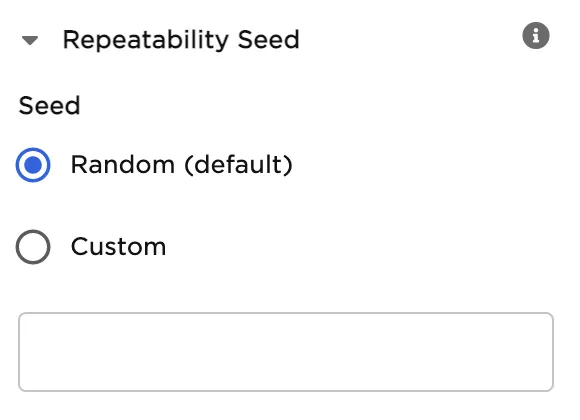

Repeatability Seed

Random numbers are used throughout the training process for splitting the original dataset, splitting individual trees, and optimizing hyperparameters. Ex Machina uses one number, called a seed, to generate those random numbers. The field in this section allows you to enter a custom seed. If you enter a custom seed, you can enter that same custom seed at a later date to reproduce the results of the training.

| Field | Description |

|---|---|

Seeddefault=Random | The number used throughout the AutoML processSelect Random to use a random number, or select Custom to enter a specific integer. |

Prediction

The output of this node is each model's predictions on the training data. This section determines how the predictions are portrayed in the resulting dataframe.

| Field | Description |

|---|---|

| modelIddefault=model name | Selected model's nameThis auto-populated field displays the selected model's name. |

Prediction Column Namedefault=prediction | The column name for the model's predictionsEnter a name for the column that contains the selected model's predictions. Column names can contain alphanumeric characters and underscores, but cannot contain spaces. |

Add column with probabilistic output scoresdefault=On | Model prediction probabilitiesLeave this switch on to create a column with the model's confidence in each prediction. Toggle this switch off to create a dataframe without this column. |

Dataset Selectiondefault=Train Dataset | Data used to display a model's predictionsSelect All Data, Train Dataset, Validation Dataset, or Test Dataset. Ex Machina displays a selected model's predictions on the dataset selected with this field. If you select Cross-validation for the Select test and validation method field, the Validation Dataset option is unavailable. |

Node Inputs/Outputs

| Input | A C3 AI Ex Machina dataframe |

|---|---|

| Output | Trained random forest classification models and a dataframe with predictions on the training data |

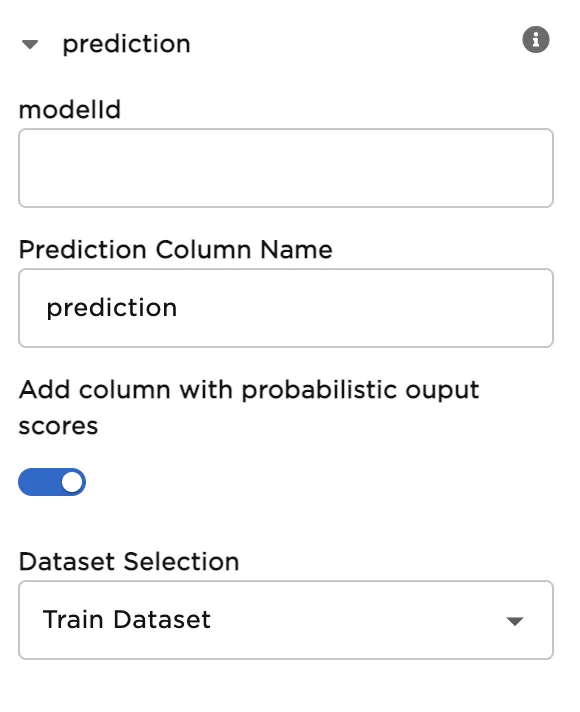

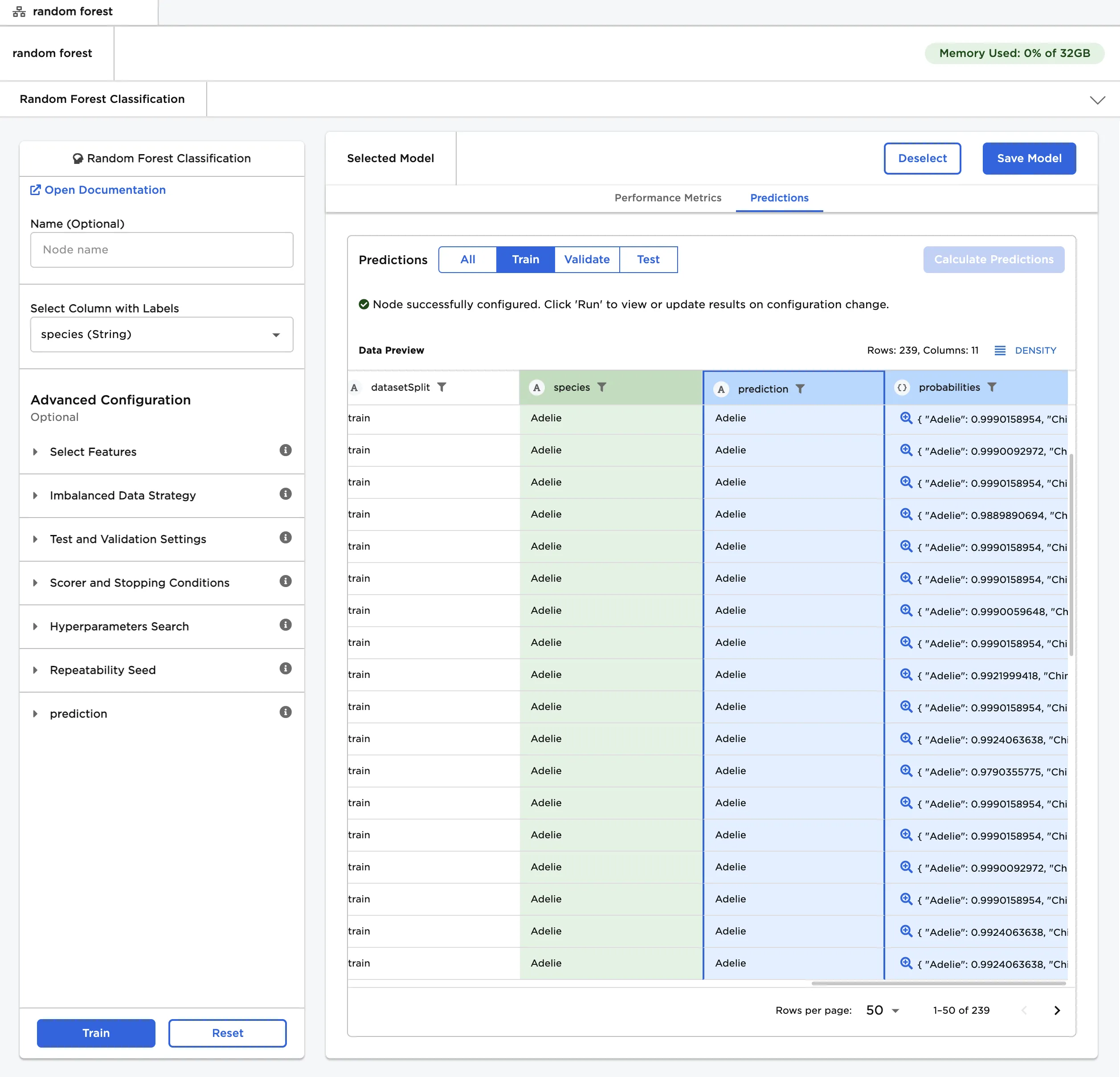

Figure 1: Example output

Examples

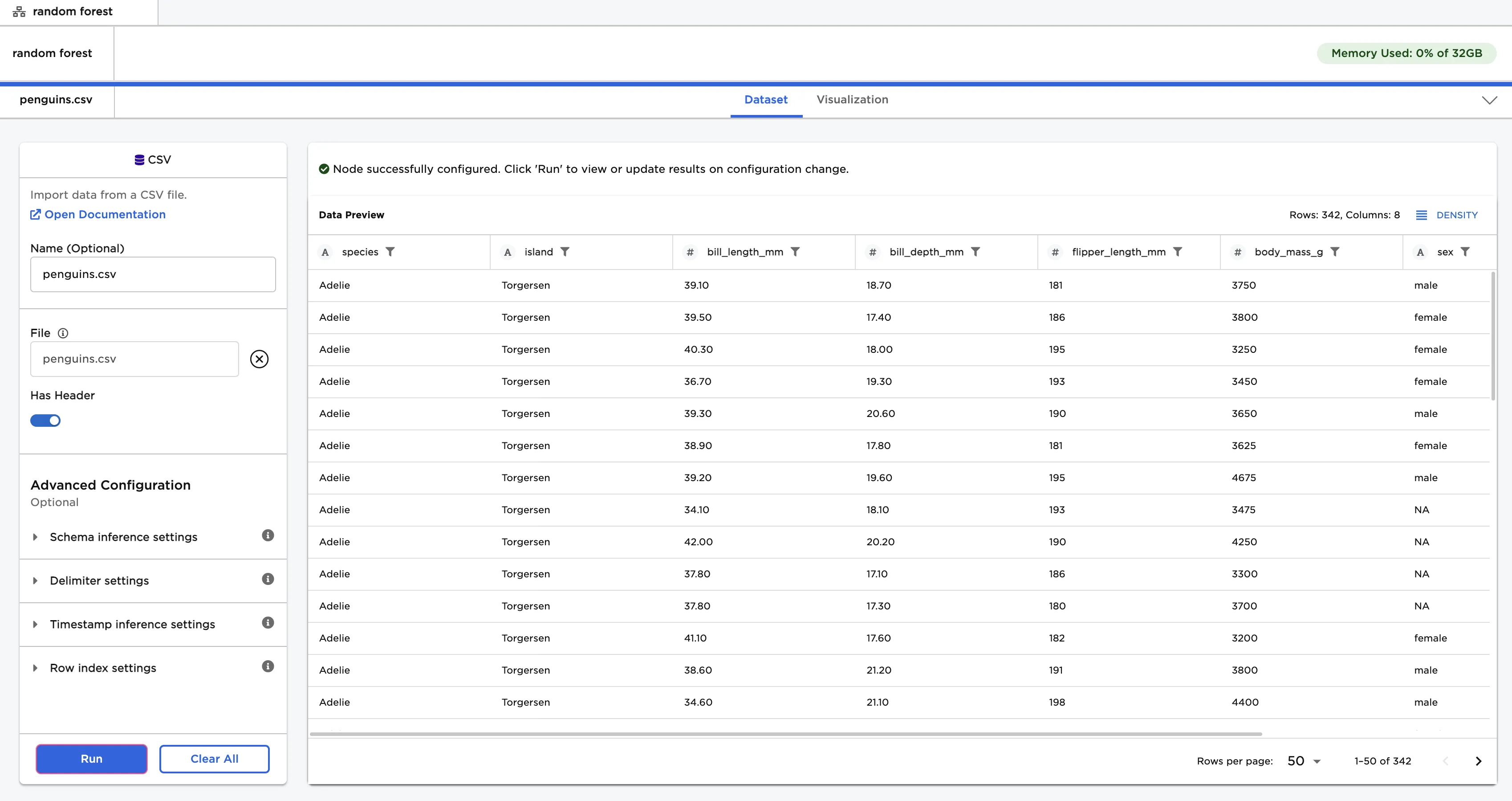

The dataframe shown in Figure 2 contains identifying characteristics of three species of penguin. This data is used to train a model that can identify the species of penguin based on the given data. This is a classification problem because the data can be grouped into different categories based on a specified label column. To learn more about classification, see the C3 AI Glossary.

Figure 2: Example input data

Follow the steps below to train a model that can identify penguin species given the input data.

- Connect a Random Forest Classification node to an existing node.

- Select species (String) for the Select Column with Labels field. The model predicts the values in this column after training.

- Select Train to train models with the default settings.

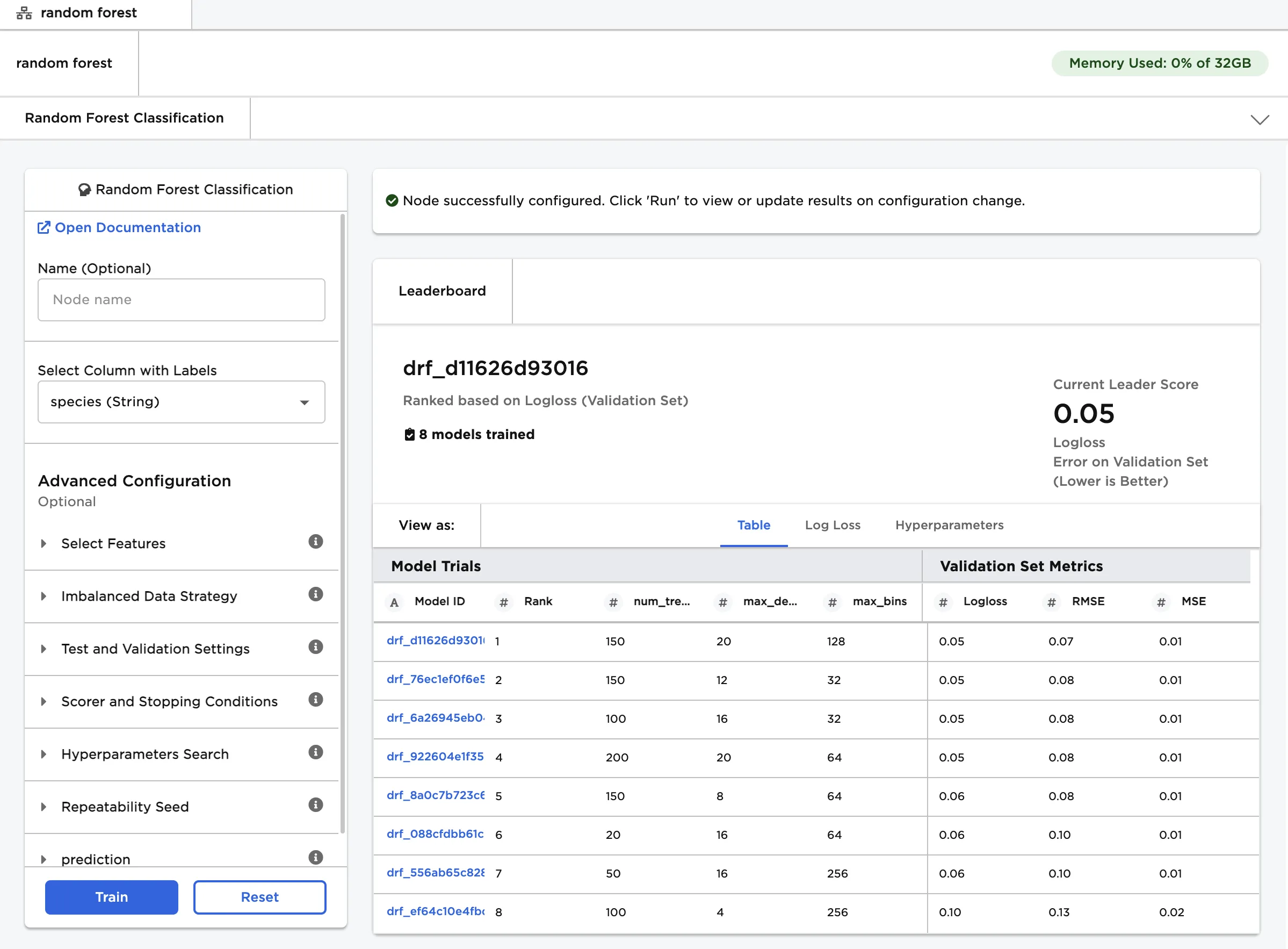

Notice that Ex Machina trains multiple models, each with different hyperparameter configurations. All trained models are displayed on a leaderboard and ranked by performance using the Logloss function. Logloss, also known as cross-entropy loss, is a commonly used loss function for classification models. Models with lower logloss values offer better predictions.

Figure 3: Model leaderboard

Follow the steps below to learn more about a specific model on the leaderboard.

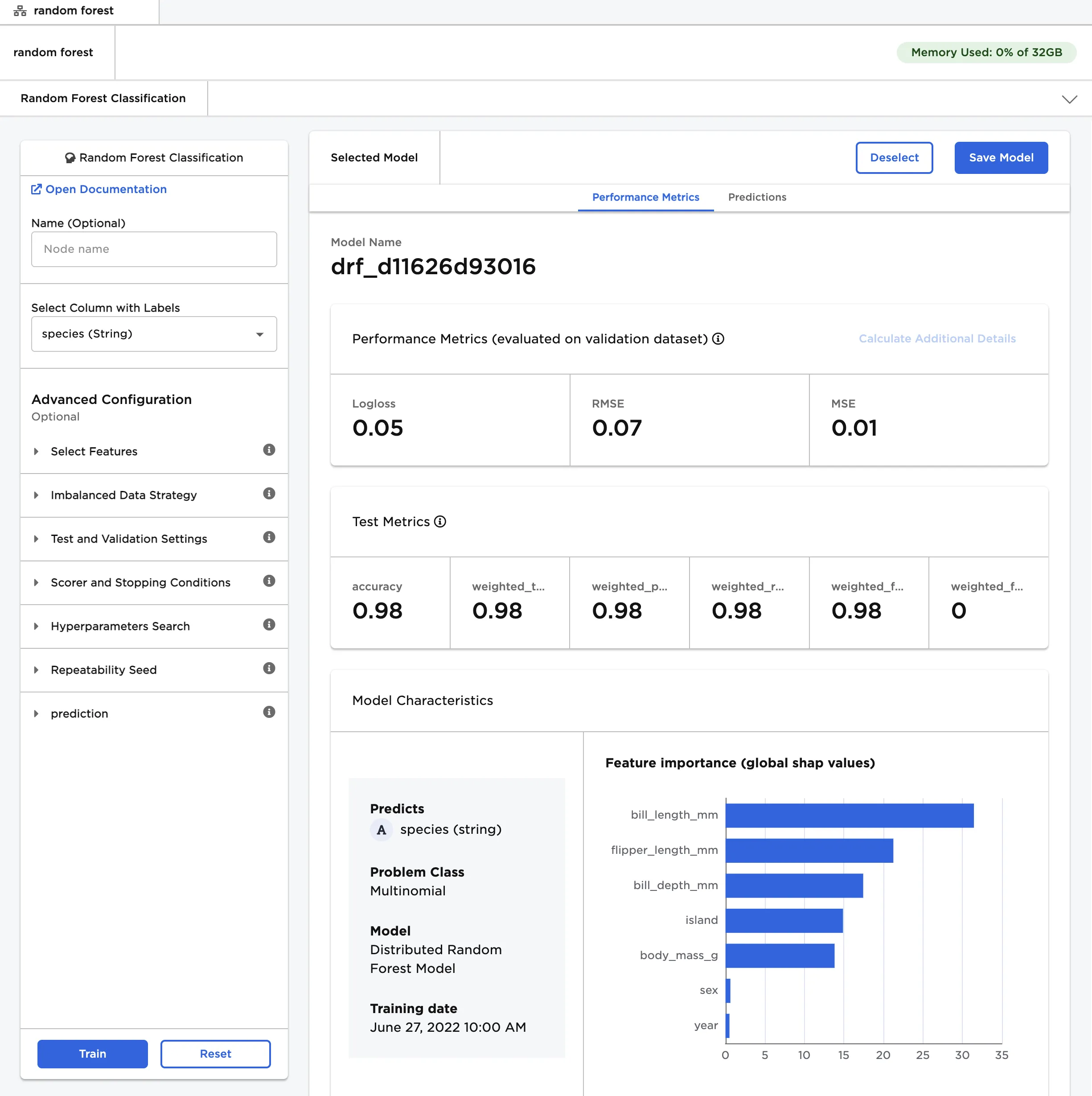

- Select a model, then scroll down to view information about the model and a bar chart with the importance of each feature.

- Select Calculate Additional Details to view additional test metrics and a confusion matrix. The button appears dimmed after it has been selected. For more information about test metrics, see the C3 AI Ex Machina User Guide.

The model selected in Figure 4 determined that bill length is the most important characteristic when categorizing penguins by species.

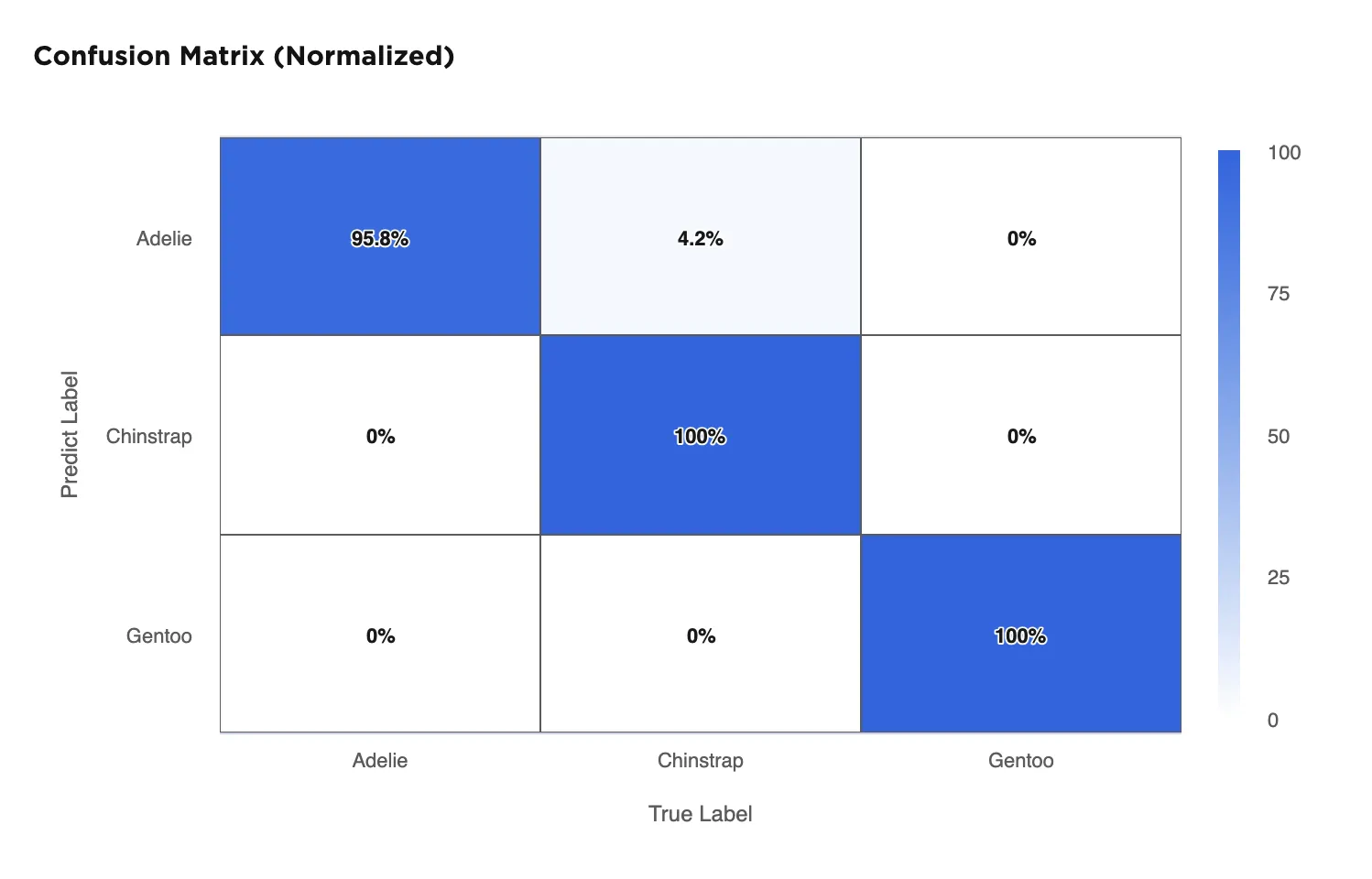

The confusion matrix shows that the selected model correctly identifies all Chinstrap and Gentoo penguins in the data, but only correctly identifies 95.8% of the Adelie penguins. It mistakenly categorizes 4.2% of Adelie penguins as Chinstrap penguins. A confusion matrix with a diagonal row of "100%" values from the top left to the bottom right indicates a perfect model.

Figure 4: Model details

Figure 5: Confusion matrix

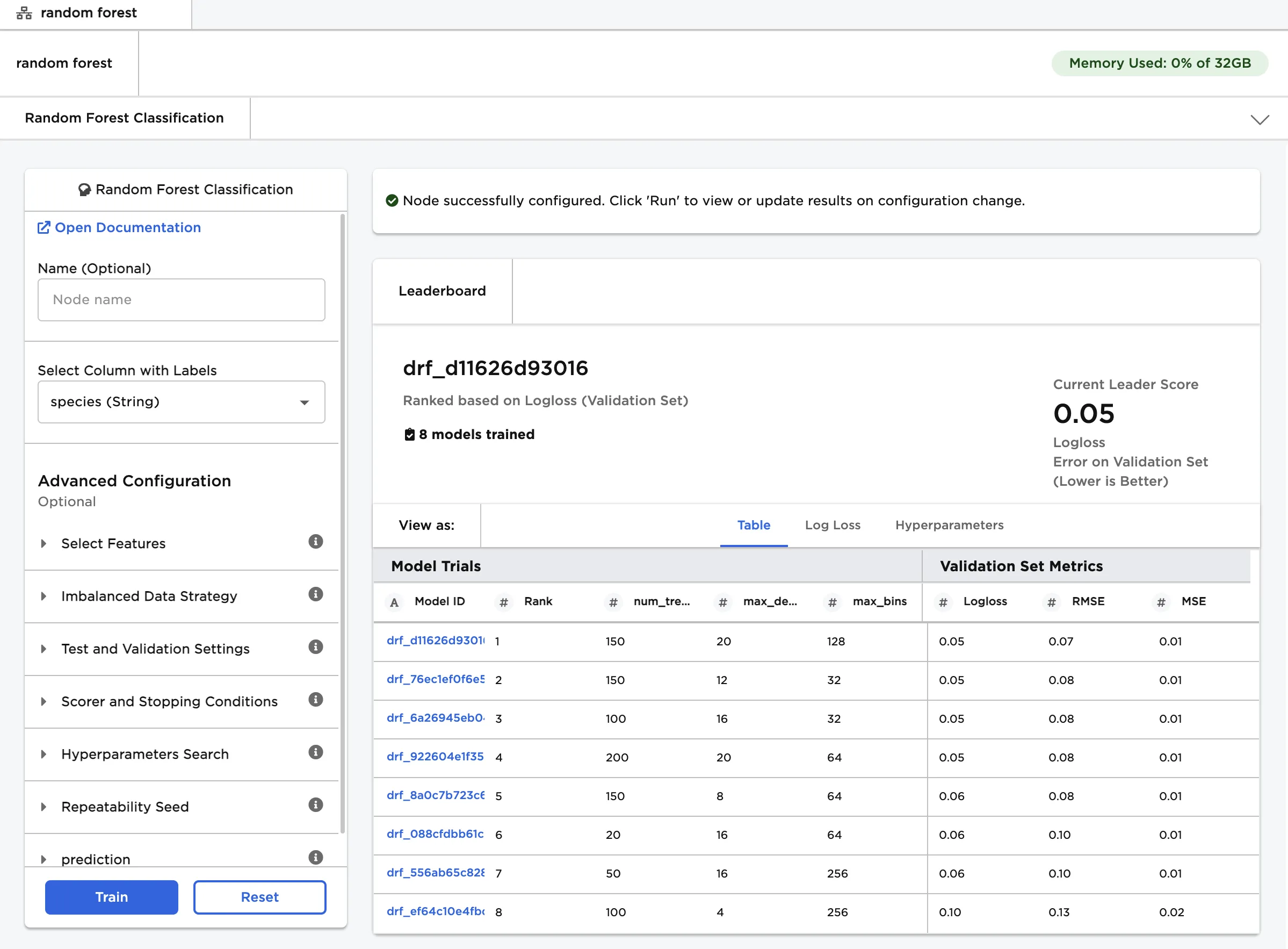

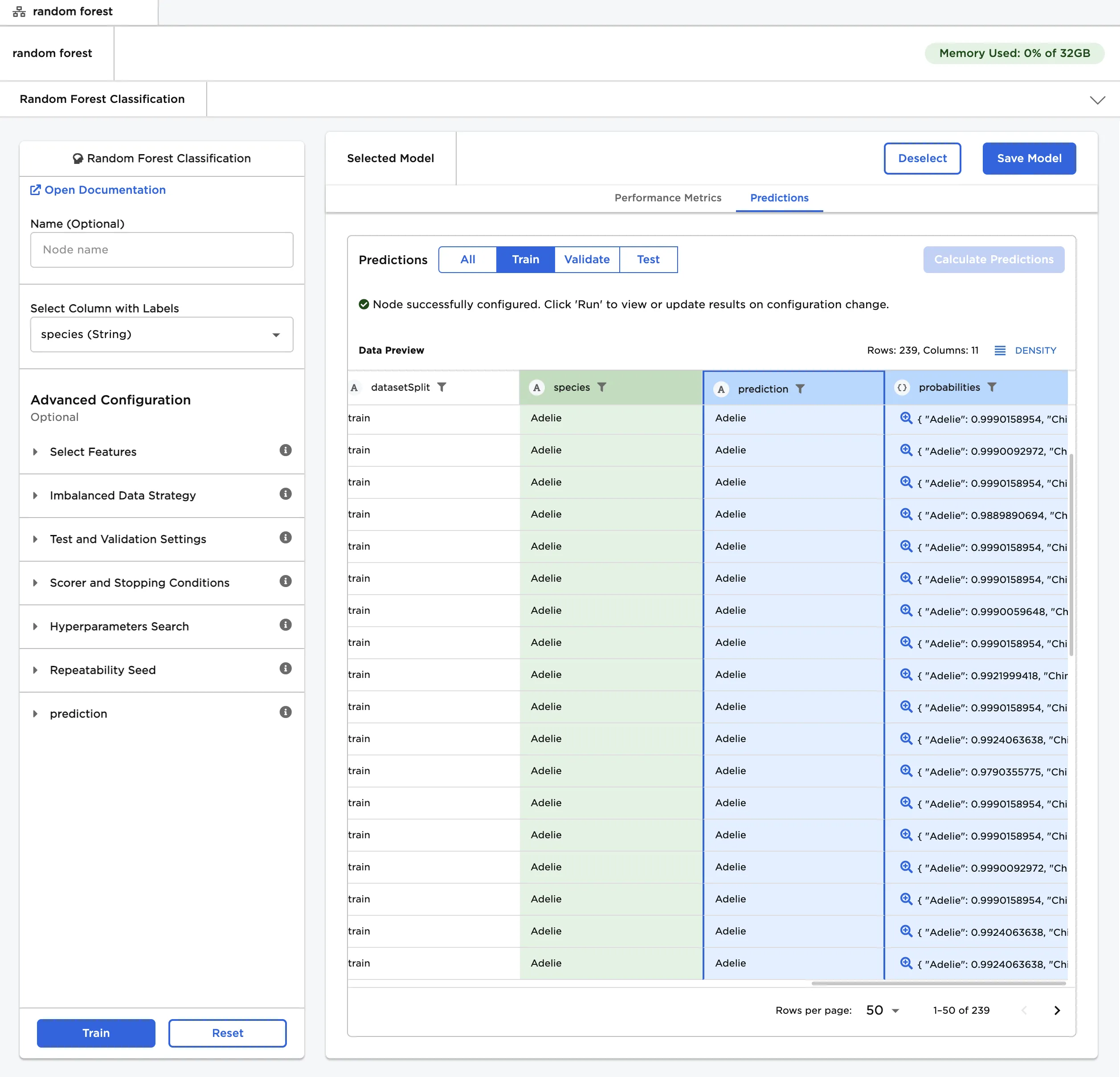

Follow the steps below to view the model's predictions.

- After you select a model, navigate to the Predictions tab.

- Select Calculate Predictions to view the selected model's predictions on the training data. The button appears dimmed after it has been selected.

If the leading model doesn't perform as well as you'd like it to, try altering the advanced configuration options and training new models.

Note that if your model correctly predicts all values, it might be overfit. In other words, the model may be too closely aligned to the training data that it is incapable of making accurate predictions on unseen data. Try altering the hyperparameters or using a different AutoML node.

Figure 6: The selected model's predictions